The Emailable Blog

Thoughts, stories and ideas to boost your email marketing ROI

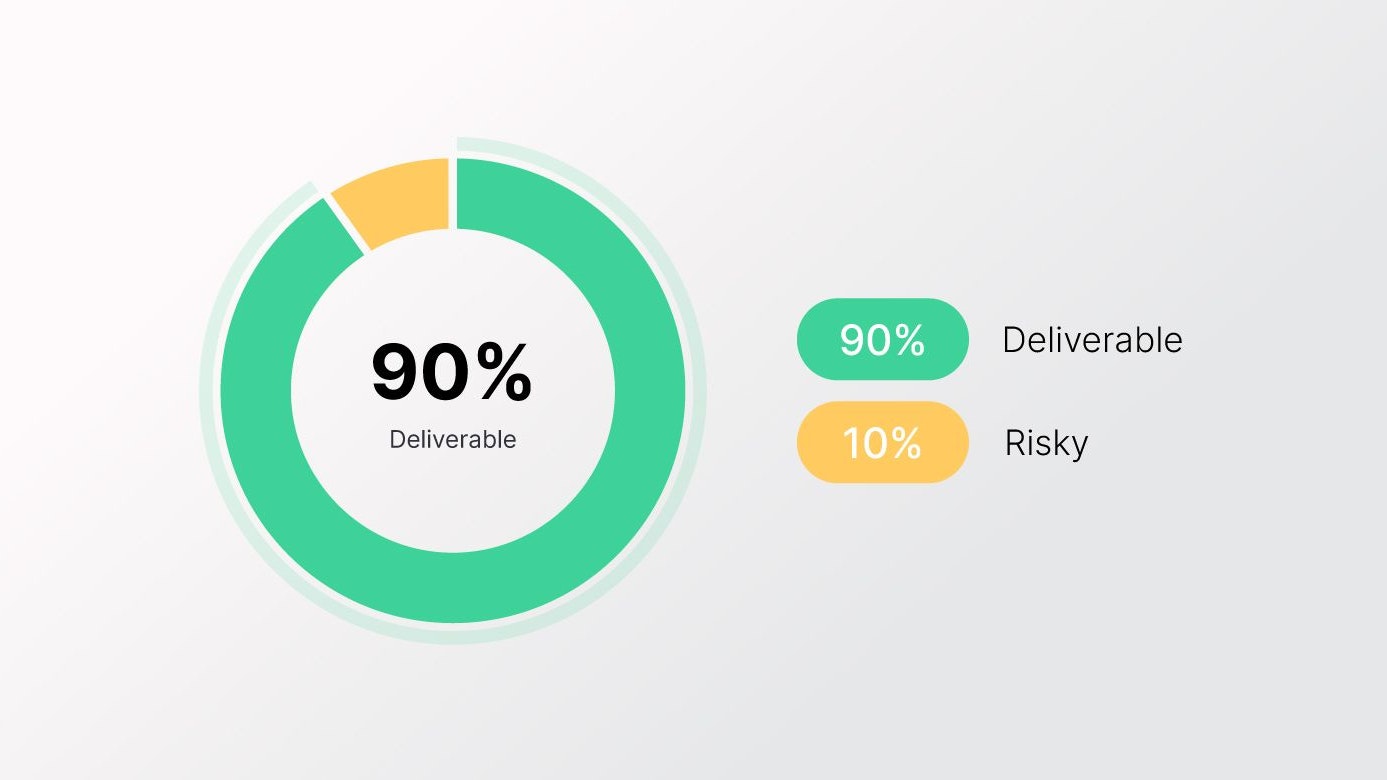

What does a healthy email list look like?

You can measure email list health by comparing your campaign performance with industry benchmarks. Although this gives you a rough idea of where you are, it doesn’t tell you where the bigger problem is or how to solve it. This is where a service like Emailable comes in...

Leana Yang February 9, 2024 • 1 min read

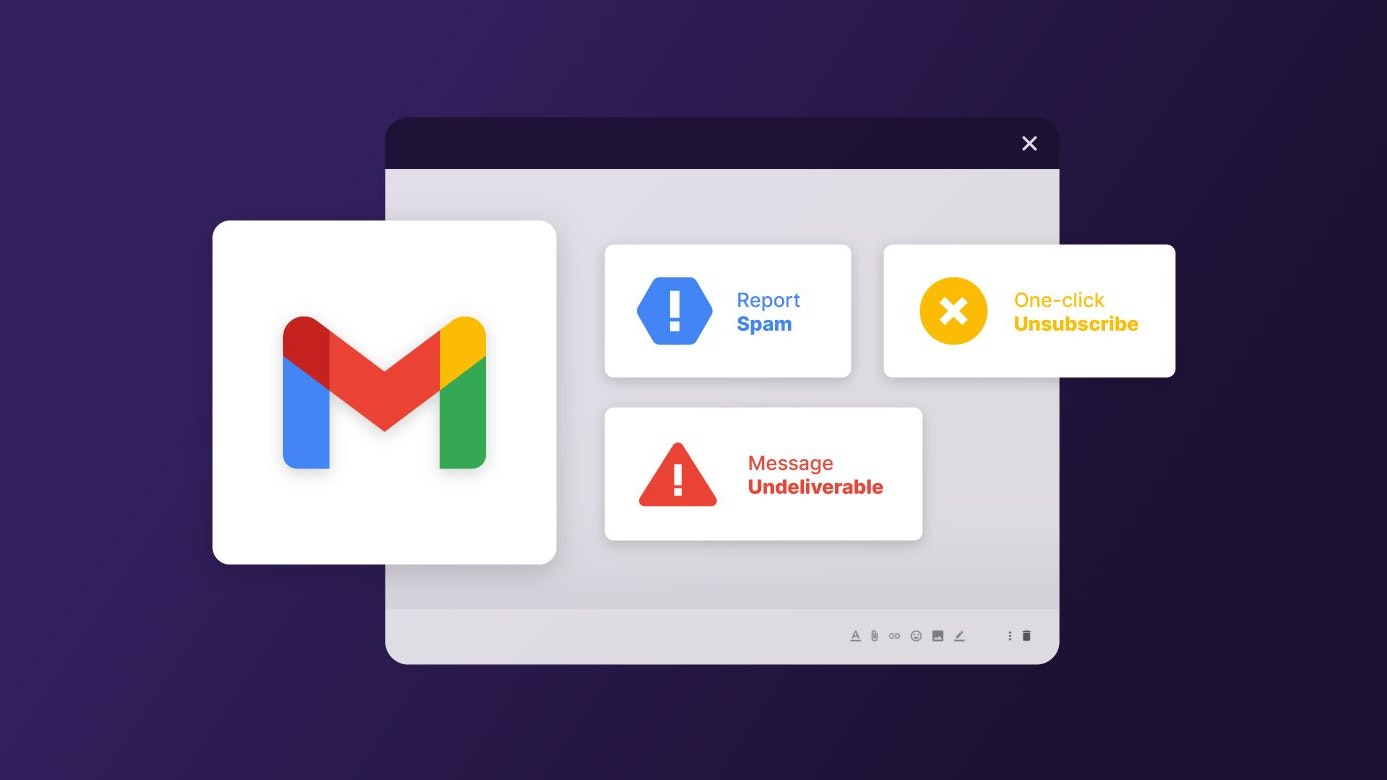

New Requirements for Gmail and Yahoo Bulk Senders

Gmail and Yahoo have recently introduced major policy updates for bulk senders. Here’s everything you need to know about the new...

Leana Yang December 14, 2023 • 3 min read

How Yahoo Emails Impact Deliverability and Sender Reputation

Over the past decade, Yahoo Mail's policies have evolved. More recently, this directly impacts the ability to classify their email...

Leana Yang September 7, 2023 • 1 min read

Email Deliverability: Common Challenges and How to Overcome Them

Picture this: You've just poured your heart and soul into creating an email campaign that you're certain will blow your audience away...

Fernanda Moura June 26, 2023 • 3 min read

How to Increase Brand Awareness Using Email Marketing?

Hey there, fellow marketer! Are you struggling to get your brand in front of your target audience? Do you feel like your marketing...

Fernanda Moura June 21, 2023 • 3 min read

Reducing Bounce Rates: How Email Verification Improves Retail Campaign Performance

Email verification plays a crucial role in reducing bounce rates by identifying and removing invalid or non-existent email addresses...

Kat Garcia June 15, 2023 • 4 min read

5 Steps for Successful Lead Generation Strategy

The ability to attract and convert prospects is what sets apart businesses paving the way forward and those left in the dust. A...

Kat Garcia June 12, 2023 • 6 min read

5 Effective Email Marketing Strategies to Generate More Sales

Email marketing has become one of the most effective forms of digital marketing and has strengthened its position rapidly. Email users...

Luiza Zeccer June 7, 2023 • 5 min read

10 Email Marketing Skills to Learn

A lot has changed since the global pandemic: jobs, careers, and work priorities have shifted considerably, and the move towards remote...

Luiza Zeccer June 2, 2023 • 8 min read

The Importance of Email Verification in Retail: Ensuring Deliverability and Improving ROI

In today’s digital age, email marketing has emerged as a powerful tool for businesses, no matter the niche you work in. Email...

Kat Garcia May 22, 2023 • 6 min read

Healthy Email List Increases Revenue for Retailers

Do you think it’s a lot of work to keep your email list healthy and clean? We know how overwhelming it can be when operating costs...

Fernanda Moura May 16, 2023 • 4 min read

How To Optimize Touchpoints And Build A Loyal Customer Base

In the ever-evolving world of business, customer loyalty has always been critical. Since customers have a wide selection of offers to...

Luiza Zeccer May 10, 2023 • 8 min read

How to Create a Killer Welcome Email Series That Converts Subscribers into Customers

Most email marketers are focused on building long and robust email lists by offering different lead magnets and other enticing...

Kat Garcia April 20, 2023 • 8 min read

How to Leverage the Power of Email Automation to Streamline Your Marketing Efforts

It’s inevitable: at some point in every marketer’s career, it feels like there are just not enough hours in a day to get what needs to...

Kat Garcia April 17, 2023 • 10 min read

A Beginner's Guide to Email Marketing: Everything You Need to Know to Get Started

Welcome! Here, we will guide you through the best practices for email marketing. Email marketing is one of the oldest forms of digital...

Kat Garcia April 11, 2023 • 8 min read

10 Components of a Successful Marketing Email

Email marketing has proven to be one of the most effective tools for businesses to reach and engage with their target audience...

Luiza Zeccer April 7, 2023 • 7 min read

The Role of Email Marketing in Customer Retention: Why It Matters and How to Do It Right

In today’s fast-paced and competitive sales world, customer retention is more important than ever, right? Since we all know that it is...

Fernanda Moura April 5, 2023 • 2 min read

How Do I Clean My Email List?

Any business, regardless of its size, has email lists it isn’t sure are valid. And today, we’re going to show you...

Fernanda Moura March 30, 2023 • 4 min read

Why Is Email Follow-up Important For Your Marketing Campaigns?

Email marketing consists of many levels. To get started you would send out cold emails, where it’s the first time you communicate with...

Kat Garcia March 27, 2023 • 6 min read

How To Use Email As A Team Collaboration Tool

There are several practical ways of ensuring your business enterprise continues to grow. One of them is by boosting team collaboration...

Luiza Zeccer March 23, 2023 • 8 min read

How to Re-Engage Your Email Subscribers

Every marketing team should know how valuable their email marketing strategies are. Many have worked tirelessly to build an email list...

Kat Garcia March 21, 2023 • 7 min read